Right now, most people's AI experience amounts to some time spent with ChatGPT and a general sense of what these tools can do. That's a starting point, not a skill set. In workplaces where data security matters and the pace of change keeps accelerating, "I've used it a bit" isn't going to be enough.

That's where certification changes the conversation.

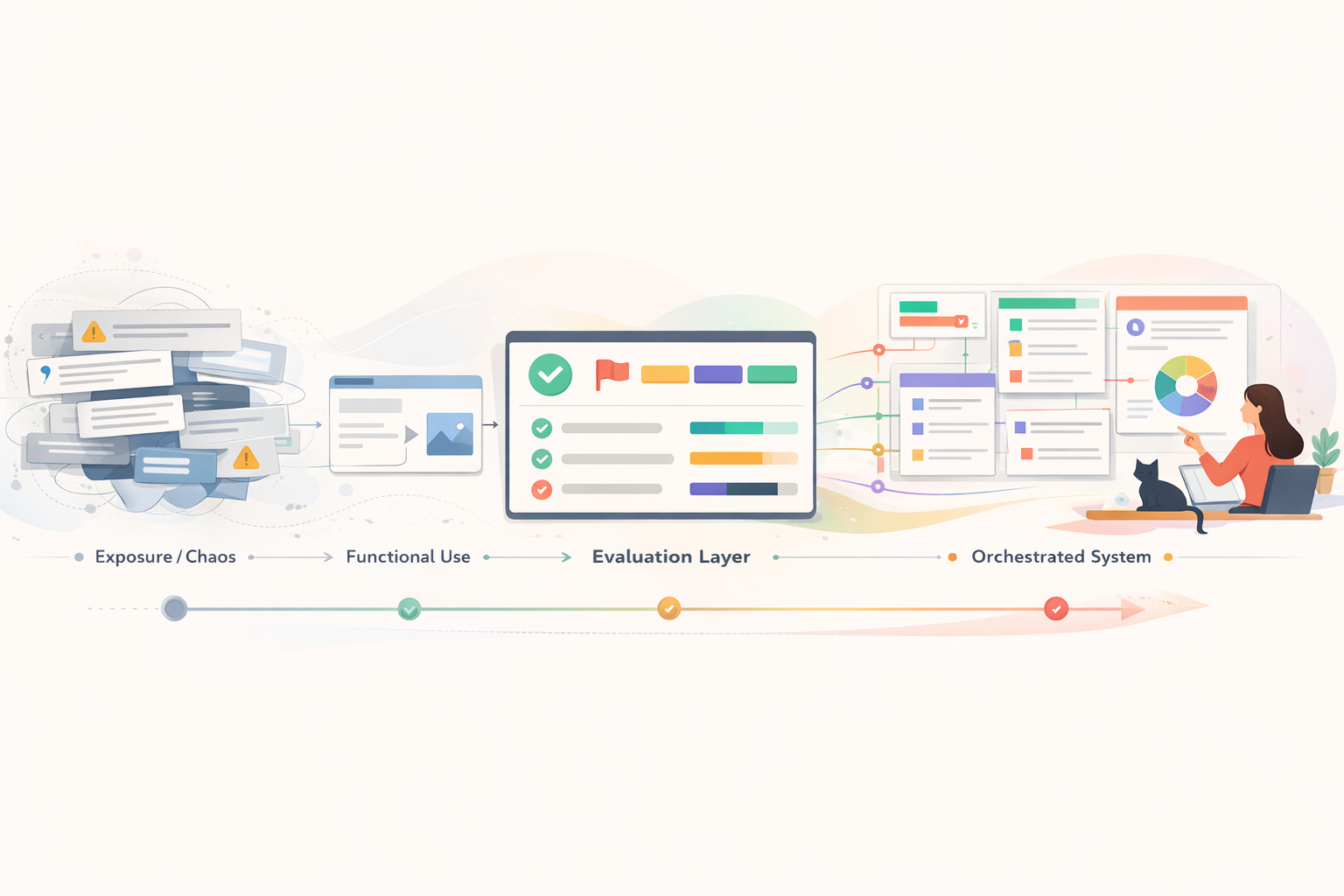

The difference between exposure and competence

There's a meaningful gap between someone who has experimented with AI tools and someone who has been trained to use them properly. The experimenter may know how to get a passable answer from a prompt. The trained professional knows how to evaluate whether that answer is reliable, what data is safe to include, how to design a workflow that's repeatable, and when to stop and apply human judgement instead.

That gap matters everywhere, but it matters most in organizations with obligations. Government departments handling citizen data. Health and social services agencies managing case files. Non-profits entrusted with vulnerable populations' information. Regulated industries where a data handling mistake isn't just embarrassing; it's a compliance violation.

In organizations with public trust obligations, AI competence isn't optional. It's a duty of care.

What certification actually proves

A recognized AI certification, like the credential pathway through the AI Learning Institute of Canada (AILIC), isn't a badge for completing a webinar. It's evidence that someone has worked through a structured learning path covering specific, verifiable competencies:

- Security and data safety. What can and can't be shared with AI tools. How to evaluate a tool's data handling policies. How to design workflows that protect sensitive information by default.

- Effective prompting and evaluation. How to write prompts that produce useful, accurate results, and how to critically assess what comes back, including recognizing when the output is wrong or misleading.

- Applied workflow design. How to identify where AI adds genuine value in your actual work, and how to build processes that are repeatable, documented, and auditable.

- Ethical use and limitations. Understanding what AI tools cannot do, where human oversight is non-negotiable, and how to maintain accountability in AI-assisted decisions.

These aren't abstract concepts. They're the practical skills that determine whether someone uses AI responsibly or recklessly. And a certification is how your employer, or your next employer, knows you've actually developed them.

Why this matters most in public and regulated organizations

If you work in government, healthcare, education, social services, or any sector where privacy legislation governs how you handle information, AI adoption comes with higher stakes. Your organization can't just encourage people to "try AI." It needs to know that the people using these tools understand the boundaries.

Consider what's at stake:

- A caseworker who pastes client details into an AI tool without understanding where that data is stored and processed (its data residency) may have just created a privacy breach.

- A government analyst who uses AI-generated statistics in a public report without verifying the output has put their department's credibility at risk.

- An HR professional who uses AI to screen resumes without understanding algorithmic bias has introduced legal exposure into the hiring process.

These aren't hypothetical risks. They're the kinds of mistakes that happen when people have access to powerful tools without structured training. Certification is how organizations demonstrate that their people have been trained: not just told to be careful, but equipped with the knowledge to work safely.

Certification doesn't just protect your career. In data-sensitive environments, it protects the people your organization serves.

Provable skills in a market that's guessing

From a career perspective, the AI skills landscape right now is the Wild West. Everyone claims proficiency. Few can demonstrate it. Hiring panels are trying to evaluate candidates' AI capabilities with no reliable standard, and job seekers are listing "AI" on their resumes without any way to substantiate what that means.

A recognized certification cuts through that ambiguity. It tells an employer: this person has been assessed against a defined standard. They didn't just watch tutorials. They worked through a structured curriculum, applied skills to real scenarios, and met the requirements of a credentialing body.

For people in public sector roles or regulated industries, this is especially valuable. These organizations are accustomed to professional designations like CPHR, CPA, and PMP. An AI certification from a recognized institution fits into that same framework of verifiable professional competence. It's language that hiring panels, HR departments, and compliance officers already understand.

What the learning path looks like

The certification pathway through the AI Learning Institute of Canada is structured in progressive levels. It doesn't assume you start with technical skills. It starts with the foundations, including security, data safety, and responsible use, then builds toward applied, domain-specific skills.

As a certified AILIC coach, I work with individuals through this pathway one-on-one. That means the learning isn't generic. We use your actual workflows, your real documents, and the specific context of your role. A program coordinator at a non-profit has different needs than a policy analyst in government, and the coaching reflects that.

The typical path covers four to eight sessions, at your own pace. By the end, you don't just have a credential. You have a set of skills you're already using in your work, a clear understanding of what's safe and what isn't, and the confidence to keep building from there.

For the individual: career insurance

AI skills are rapidly moving from "nice to have" to "expected." The professionals who will be best positioned aren't the ones who waited to see what happened. They're the ones who invested in structured learning early and can prove it.

A certification is career insurance. It says: I took this seriously. I learned it properly. I can be trusted with these tools. In a competitive job market where more and more resumes mention AI, that proof matters more than any self-reported claim.

For the employer: risk reduction

If you're a manager or director in an organization that handles sensitive data, ask yourself: would you rather your team learn AI through trial and error, or through a structured program that covers security, ethics, and practical application from the start?

Investing in certification for your team isn't just professional development. It's risk management. It's the ability to demonstrate to auditors, to leadership, and to the public that your people were trained properly, verifiably, and with security as the foundation.

In five years, "we didn't train our people" won't be an acceptable explanation for an AI-related data incident. The time to build those provable skills is now.

If you're ready to start building a credential that carries real weight, one that proves your skills to the organizations that need to see proof, coaching can get you there. We'll work through the AILIC pathway together, at your pace, with your work as the foundation.